Hey all,

I hope you are safe and healthy during the pandemic.

This post may seem very simple to some people, but it will be useful to others.

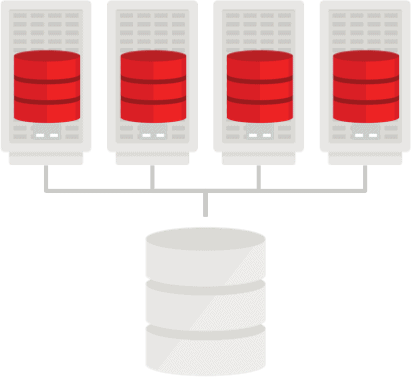

A few weeks ago a client asked for our support. One of their cluster nodes had gone down and stayed unavailable for 3 weeks, and during that time (believe me) no database backups were generated, because the backup shell script was scheduled in Cron only on the node that went down.

Most of the clients I have worked for had a backup tool that manages the whole backup lifecycle, including scheduling. Tools such as Data Protector, SnapCenter, TSM and Networker make this easy. These tools need an agent installed on each node where the database is running, and the backup server triggers the backup by sending requests to an agent. If an agent becomes unavailable (whether because the OS crashed or because the agent itself went down), the tool can trigger the backup by sending the requests to a surviving node.

But this topology isn't a reality for every customer, and many customers still rely on good old Cron to schedule backups: Cron triggers a shell script, which calls RMAN and runs a few RMAN commands to generate the backup.

For clients that still use Cron, the challenge is: how can we guarantee that the script is stored on all servers and that the schedule is enabled in Cron on all servers?

To store a script on all servers, an NFS mount point can help, but some clients can't use this topology. I have worked for clients where the use of NFS is restricted, to the point of blocking NFS ports on the firewall.

In that case, a feasible solution is to place a copy of the script on every node and make sure that whenever you change the script on the first node, you copy it to all the remaining nodes.
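Keeping the copies in sync by hand is easy to forget, so here is a minimal sketch of how the copy step could be scripted. It is only an illustration: the node names `node2` and `node3` are placeholders, the function name `copy_to_nodes` is made up for this example, and it assumes passwordless SSH between the nodes for the user that owns the script. By default it only prints the commands it would run.

```shell
#!/bin/bash
# Sketch: push the local copy of the backup script to the other cluster nodes.
# Assumptions: passwordless SSH for the current user, same path on every node.

SCRIPT=/u01/app/oracle/scripts/backup_full.sh
NODES="node2 node3"    # hypothetical node names, replace with your own

# Copy one file to each node given as an argument.
# With DRY_RUN=1 (the default here) the scp commands are only printed.
copy_to_nodes() {
    local script=$1
    shift
    local node
    for node in "$@"; do
        if [ "${DRY_RUN:-1}" = "1" ]; then
            echo "scp -p ${script} ${node}:${script}"
        else
            scp -p "${script}" "${node}:${script}" || echo "copy to ${node} failed" >&2
        fi
    done
}

# NODES is left unquoted on purpose so it splits into one argument per node.
copy_to_nodes "${SCRIPT}" ${NODES}
```

Run it with `DRY_RUN=0` to really copy the file. The RMAN command file used by the script would need the same treatment, since it also lives in the local scripts directory.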

But what about Cron scheduling?

You may not have seen a blog post I published here in March 2019, in which I discussed copying the OCR backup to a filesystem. In that post I explained that my script executes ONLY on the cluster master node. So you can keep a copy of your script on all nodes, with the Cron schedule enabled on all nodes as well, but only the master node will execute the script, because in a cluster architecture there is only one master node at a time. That post has the details.

So the idea is to use a shell script that exists on all cluster nodes, with the Cron schedule enabled on all of them. The script checks whether the node that triggered the execution is the master node. If it is not, the script exits; if it is, the script calls RMAN with the backup command file (the RMAN commands).

You can check the script backup_full.sh below:

#!/bin/bash

export GRID_HOME=/u01/app/193/grid

export ORACLE_HOME=/u01/app/oracle/product/19.3.0/dbhome_1

export PATH=${GRID_HOME}/bin:${ORACLE_HOME}/bin:${PATH}

export DT=`date +%m_%d_%Y`

export MASTER_NODE=`oclumon manage -get master | grep Master | awk '{print $3}' | cut -d "." -f1 | sed -e 's/\(.*\)/\U\1/'`

export HOST=`echo ${HOSTNAME} | cut -d "." -f1 |sed -e 's/\(.*\)/\U\1/'`

export WORK_DIR=/u01/app/oracle/scripts

export LOG_DIR=${WORK_DIR}/log

if [ "${HOST}" == "${MASTER_NODE}" ]; then

$ORACLE_HOME/bin/rman cmdfile=${WORK_DIR}/backup_full.rman log=${LOG_DIR}/backup_full_${DT}.log

else

exit

fi
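One thing to keep in mind with the comparison above: if the master lookup returns nothing (for example, because the command fails on that node), the script simply exits and no node reports a problem. As a hedged sketch, the check could be wrapped in two small functions so that an empty master name is treated as an error instead of a silent skip. The function names `normalize_host` and `is_master_node` are made up for this example.

```shell
#!/bin/bash
# Sketch: master-node test that distinguishes "not the master" from
# "could not determine the master".

# Strip the domain part and upper-case a hostname so both sides compare equal.
normalize_host() {
    echo "$1" | cut -d "." -f1 | tr '[:lower:]' '[:upper:]'
}

# $1 = this host, $2 = master node name.
# Returns 0 if this host is the master, 1 if it is not,
# and 2 if the master name is empty.
is_master_node() {
    local host master
    host=$(normalize_host "$1")
    master=$(normalize_host "$2")
    [ -n "${master}" ] || return 2
    [ "${host}" = "${master}" ]
}
```

In backup_full.sh this could be called as `is_master_node "${HOSTNAME}" "${MASTER_NODE}"`, running RMAN on return code 0, exiting quietly on 1, and writing a message to the log directory on 2.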

So, supposing the scripts are placed in /u01/app/oracle/scripts, the Cron schedule can be configured on all nodes as follows:

0 0 * * 6 /u01/app/oracle/scripts/backup_full.sh 1>/dev/null 2>/dev/null

So the script /u01/app/oracle/scripts/backup_full.sh will be triggered every Saturday at midnight on every node, and only the master node will actually run the backup.

This post does not aim to explain the options for backing up a RAC database, but the file backup_full.rman can have the following content (just an idea, OK?):

connect target /

backup as compressed backupset database plus archivelog delete input;

exit

Hope it helps.

Peace!

Vinicius

Disclaimer

My postings reflect my own views and do not necessarily represent the views of my employer, Accenture.